The fastest reasoning LLMpowered by diffusion

Todaywe're introducing Mercury 2 — the world's fastest reasoning language modelbuilt to make production AI feel instant.

Why speed matters more now

Production AI isn't one prompt and one answer anymore. It's loops: agentsretrieval pipelinesand extraction jobs running in the background at volume. In loopslatency doesn’t show up once. It compounds across every stepevery userevery retry.

Yet current LLMs still share the same bottleneck: autoregressivesequential decoding. One token at a timeleft to right.

A new foundation: Diffusion for real-time reasoning

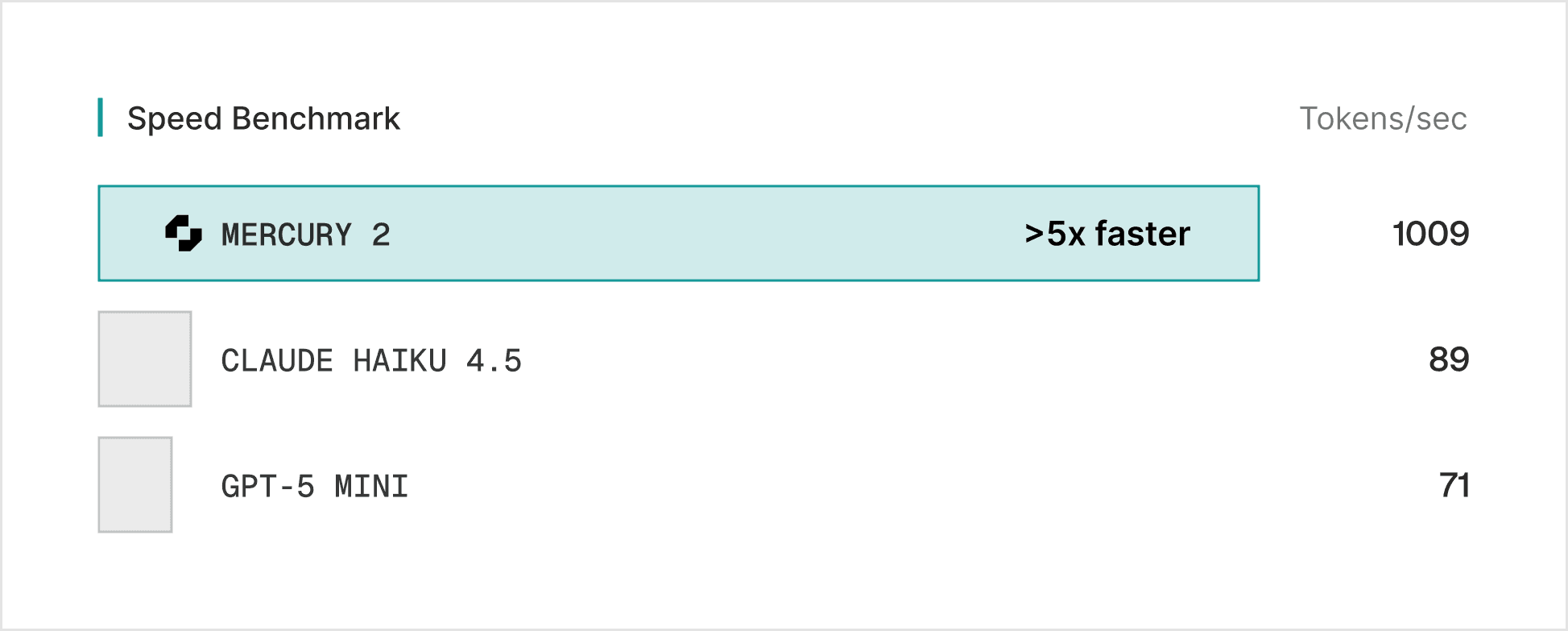

Mercury 2 doesn't decode sequentially. It generates responses through parallel refinementproducing multiple tokens simultaneously and converging over a small number of steps. Less typewritermore editor revising a full draft at once. The result: >5x faster generation with a fundamentally different speed curve.

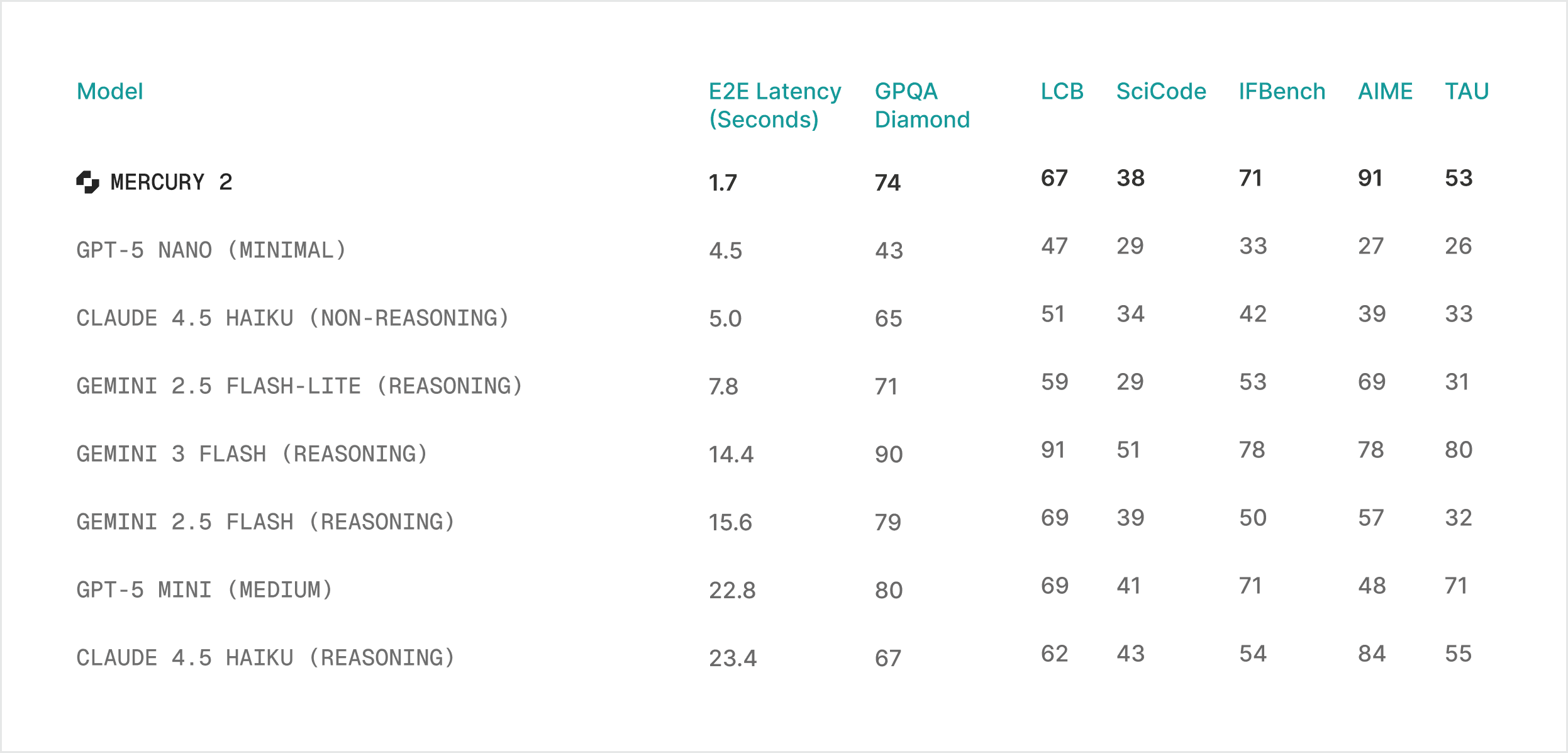

That speed advantage also changes the reasoning trade-off. Todayhigher intelligence means more test-time compute — longer chainsmore samplesmore retries — bought at the direct expense of latency and cost. Diffusion-based reasoning gets you reasoning-grade quality inside real-time latency budgets.

Mercury 2 at a glance

Mercury 2 shifts the quality-speed curve for production deployments:

Speed: 1,009 tokens/sec on NVIDIA Blackwell GPUs

Price: $0.25/1M input tokens · $0.75/1M output tokens

Quality: competitive with leading speed-optimized models

Features: tunable reasoning · 128K context · native tool use · schema-aligned JSON output

We optimize for speed users actually feel: responsiveness in the moments users experience - p95 latency under high concurrencyconsistent turn-to-turn behaviorand stable throughput when systems get busy.

“Inception’s Mercury 2 demonstrates what’s possible when new model architecture meets NVIDIA AI infrastructure. Surpassing 1,000 tokens per second on NVIDIA GPUs underscores the performancescalabilityand versatility of our platform to power the full spectrum of AI workloads.”

Shruti KoparkarSenior Manager of ProductAccelerated Computing Group at NVIDIA

What Mercury 2 unlocks in production

Mercury 2 excels in latency-sensitive applications where the user experience is non-negotiable.

1. Coding and editing

Autocompletenext-edit suggestionsrefactorsinteractive code agents - workflows where the developer is in the loop and any pause breaks flow.

“Suggestions land fast enough to feel like part of your own thinkingnot something you have to wait for.”

Max BrunsfeldCo-FounderZed

2. Agentic loops

Agentic workflows chain dozens of inference calls per task. Cutting latency per call doesn't just save timeit changes how many steps you can afford to runand how good the final output gets.

“We’re now leveraging the latest Mercury model to intelligently optimize campaign execution at scale. By surfacing insights and dynamically enhancing delivery in real timewe’re driving stronger performancegreater efficiencyand a more resilientAI-powered advertising ecosystem. This advancement reinforces our commitment to autonomous advertisingwhere intelligent systems continuously refine execution to deliver measurable outcomes for our clients.”

Adrian WitasSVPChief ArchitectViant

“We’ve been evaluating Mercury 2 because of its unparalleled latency and qualityespecially valuable for real time transcript cleanup and interactive HCI applications. No other model has come close to the speed Mercury can provide!”

Sahaj GargCTO & Co-FounderWispr Flow

"Mercury 2 is at least twice as fast as GPT-5.2which is a game changer for us."

Suchintan SinghCTO & Co-FounderSkyvern

3. Real-time voice and interaction

Voice interfaces have the tightest latency budget in AI. Mercury 2 makes reasoning-level quality viable within natural speech cadences.

“We build lifelike AI video avatars that hold real-time conversations with real peopleso low latency isn't a nice-to-haveit's everything. Mercury 2 has been a big unlock in our voice stack: fastconsistent text generation that keeps the whole experience feeling natural and human.”

Max SapoCEO & Co-FounderHappyverse AI

“Mercury 2 quality is excellentand the model’s low latency enables more responsive voice agents.”

Oliver SilversteinCEO & Co-FounderOpenCall

4. Search and RAG pipelines

Multi-hop retrievalrerankingand summarization latencies stack fast. Mercury 2 lets you add reasoning to the search loop without blowing your latency budget.

“Our partnership with Inception makes real-time AI for our search product practical. Every SearchBlox customeracross customer supportcomplianceriskanalyticsand e-commercebenefits from sub-second intelligence across all of their data.”

Timo SelvarajChief Product OfficerSearchBlox

Get started

Mercury 2 is available now.

Mercury 2 is OpenAI API compatible. Drop into your existing stack - no rewrites required.

If you’re doing an enterprise evaluationwe’ll partner with you on workload fiteval designand performance validation under your expected serving constraints.